There's a specific kind of paralysis that comes with starting something on your own.

At work, you're never really alone. There are people thinking about the problem before it reaches you — PMs, analysts, teammates with context you didn't have to generate yourself. You show up to implementation with support you didn't have to ask for. It's invisible until it's gone.

When you start something on your own, you lose all of it at once. As an engineer, my first instinct is to jump to implementation, because that's the part I know how to do. But losing that support isn't unique to engineers. Anyone who has tried to solve a complex problem alone has felt it.

For me, it showed up in the least likely place: a sim racing project I built to fix my own driving. The domain is specific. The lesson isn't.

Rookie Problem, No Rookie Tools

Recently, I've gotten into sim racing — iRacing, a Fanatec direct-drive wheel, load cell brakes, an ultrawide monitor, the whole thing. And like most people who dive in headfirst on hardware, I hit a wall. The equipment was there. The improvement wasn't.

I'd watch hours of content, read about trail braking and throttle application, then get back in the car and drive almost exactly the same. The problem wasn't the advice — it was that nothing connected to what I was specifically doing wrong on track.

Every tool I found was built for the driver who's already fast and wants to get faster. Millisecond lap comparisons. Corner-specific overlays. Useful — but only once you already understand what you're looking at. As a rookie trying to get consistent, they told me nothing.

Left to right: ATLAS Express (multi-channel telemetry), iRacing's scatter plot analyzer (yaw rate, G-forces, tyre temps), Track Titan (corner trace with coaching callouts). Powerful tools — assuming you already know what you're looking at.

So I decided to build something that would tell me what I was doing wrong.

The Roles This Required

I'm a full stack software and data engineer. I build front-end applications, data pipelines, and everything in between. This project wasn't going to require me to learn a new discipline.

What it required was a team.

In a normal company, data moves through a chain of specialists. Someone instruments the product and collects data. It accumulates in a warehouse. A data analyst processes it and surfaces insights. A PM asks what we're building next and whether we're going in the right direction. Nobody thinks much about this because the handoffs are defined and each person has one job.

On my past solo projects, I inherited the entire org chart alone. The roles fought each other. I'd stop being an analyst to become a platform engineer, and I lost momentum with every context switch. The PM questions (are we building the right thing?) never got asked because nobody had the headspace to ask them.

This project was different. From day one I had Claude Code open — not one window, multiple. A tmux setup with split panes running in parallel: one agent working on the data pipeline, another on analytics, another building out UI against a data structure we hadn't finished yet. While those were running, I'd drop into another pane and talk to a PM agent about where the gaps were and what direction to move next.

The roles still existed. They just didn't have to be filled one at a time.

What I didn't realize immediately was that this wasn't just AI helping me code faster. The structure that emerged — data engineering feeding analytics, analytics feeding the coach, a separate thread constantly asking whether any of it was heading the right direction — mapped exactly to how real teams are structured. It took until the first coaching log came back for me to see it clearly.

I filled those roles with Claude Code, but not in the way I expected.

The First Coaching Log

I started with the data engineering problem: iRacing records telemetry in IBT files. What's in them, and how do I read them?

I asked Claude Code to figure it out — what the format contained, what libraries existed, what the data looked like. Within an hour I had a working parser. The IBT files turned out to be extraordinarily rich: throttle, brake, steering angle, gear, speed, lateral and longitudinal G-forces, and — unexpectedly — GPS lat/long coordinates for every telemetry point.

I spun up a NeonDB instance and told Claude Code to design a schema for longitudinal tracking — how a driver improves over time, across different tracks and cars. I described the problem and let it architect the solution.
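For a concrete picture, here is one minimal way a longitudinal schema could look. The table and column names are my own illustration, not the schema Claude Code actually produced, and SQLite stands in for the Postgres instance on NeonDB so the sketch runs anywhere. The core idea is that every lap hangs off a session tagged with track and car, so improvement can be queried per combination over time.

```python
import sqlite3

# Hypothetical schema for longitudinal tracking. SQLite stands in for
# Postgres (NeonDB) so the sketch runs locally.
SCHEMA = """
CREATE TABLE sessions (
    id         INTEGER PRIMARY KEY,
    track      TEXT NOT NULL,
    car        TEXT NOT NULL,
    started_at TEXT NOT NULL              -- ISO-8601 timestamp
);
CREATE TABLE laps (
    session_id INTEGER NOT NULL REFERENCES sessions(id),
    lap_number INTEGER NOT NULL,
    lap_time_s REAL    NOT NULL,
    is_clean   INTEGER NOT NULL,          -- 1 if no off-track or reset
    PRIMARY KEY (session_id, lap_number)
);
CREATE TABLE corner_metrics (
    session_id    INTEGER NOT NULL,
    lap_number    INTEGER NOT NULL,
    corner_id     INTEGER NOT NULL,
    brake_point_m REAL,                   -- lap distance where braking began
    PRIMARY KEY (session_id, lap_number, corner_id),
    FOREIGN KEY (session_id, lap_number) REFERENCES laps(session_id, lap_number)
);
"""


def progress_for(conn, track, car):
    """Average clean-lap time per session for one track/car pair, oldest first."""
    return conn.execute(
        """
        SELECT s.started_at, ROUND(AVG(l.lap_time_s), 3), COUNT(*)
        FROM sessions AS s
        JOIN laps AS l ON l.session_id = s.id
        WHERE s.track = ? AND s.car = ? AND l.is_clean = 1
        GROUP BY s.id
        ORDER BY s.started_at
        """,
        (track, car),
    ).fetchall()


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    conn.executescript(SCHEMA)
    # Illustrative rows, not real session data.
    conn.executemany("INSERT INTO sessions VALUES (?, ?, ?, ?)", [
        (1, "track_a", "car_x", "2024-01-05T19:00"),
        (2, "track_a", "car_x", "2024-01-12T19:00"),
    ])
    conn.executemany("INSERT INTO laps VALUES (?, ?, ?, ?)", [
        (1, 1, 101.9, 1), (1, 2, 100.4, 1), (1, 3, 115.0, 0),
        (2, 1, 99.8, 1), (2, 2, 100.0, 1),
    ])
    for row in progress_for(conn, "track_a", "car_x"):
        print(row)
```

In Postgres, adding `stddev_samp(l.lap_time_s)` to the same query would track consistency alongside pace; core SQLite has no standard deviation aggregate, which is why the sketch sticks to averages.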

By the end of the first week, I had a telemetry ingestion pipeline and per-session coaching logs.

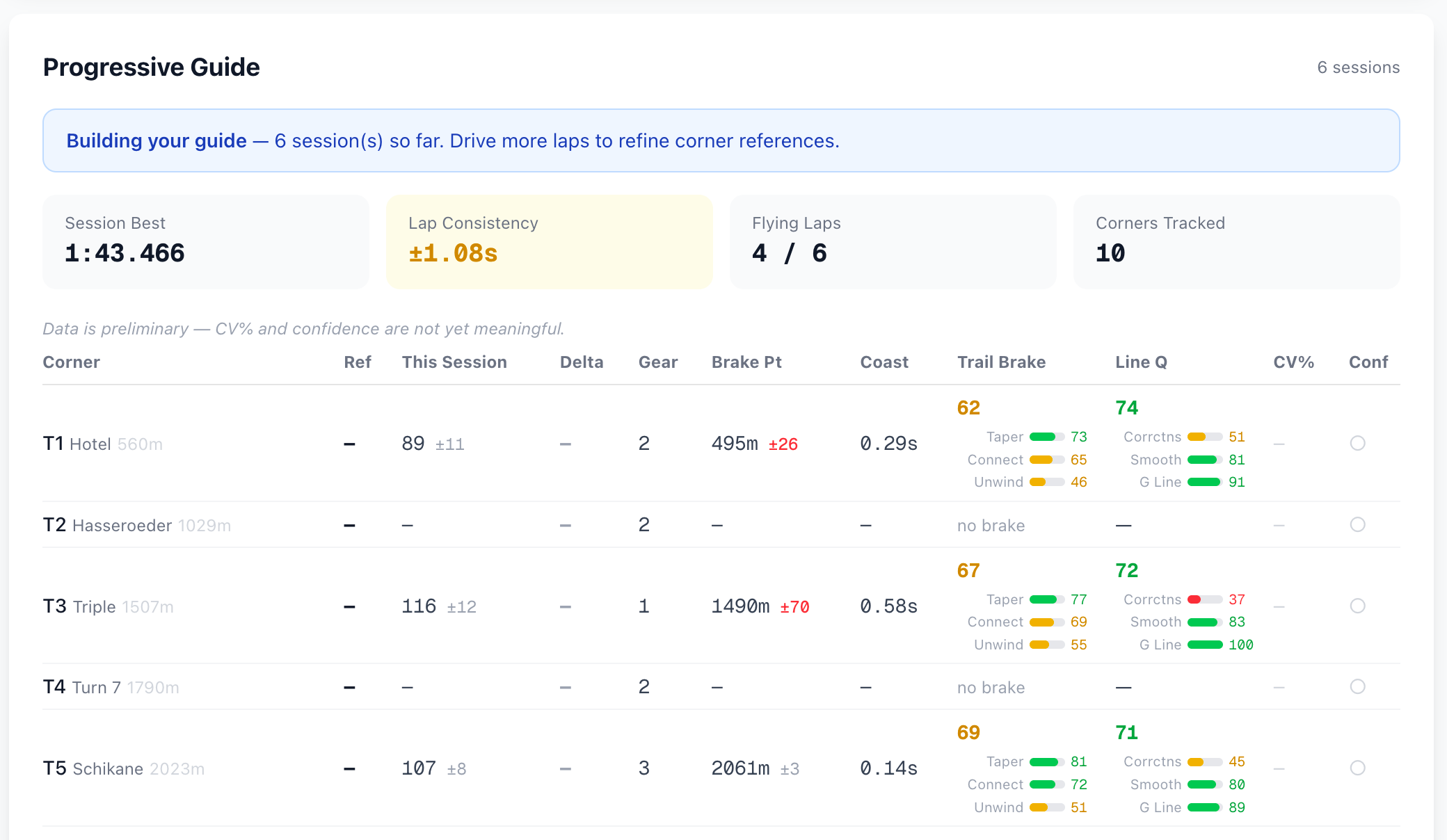

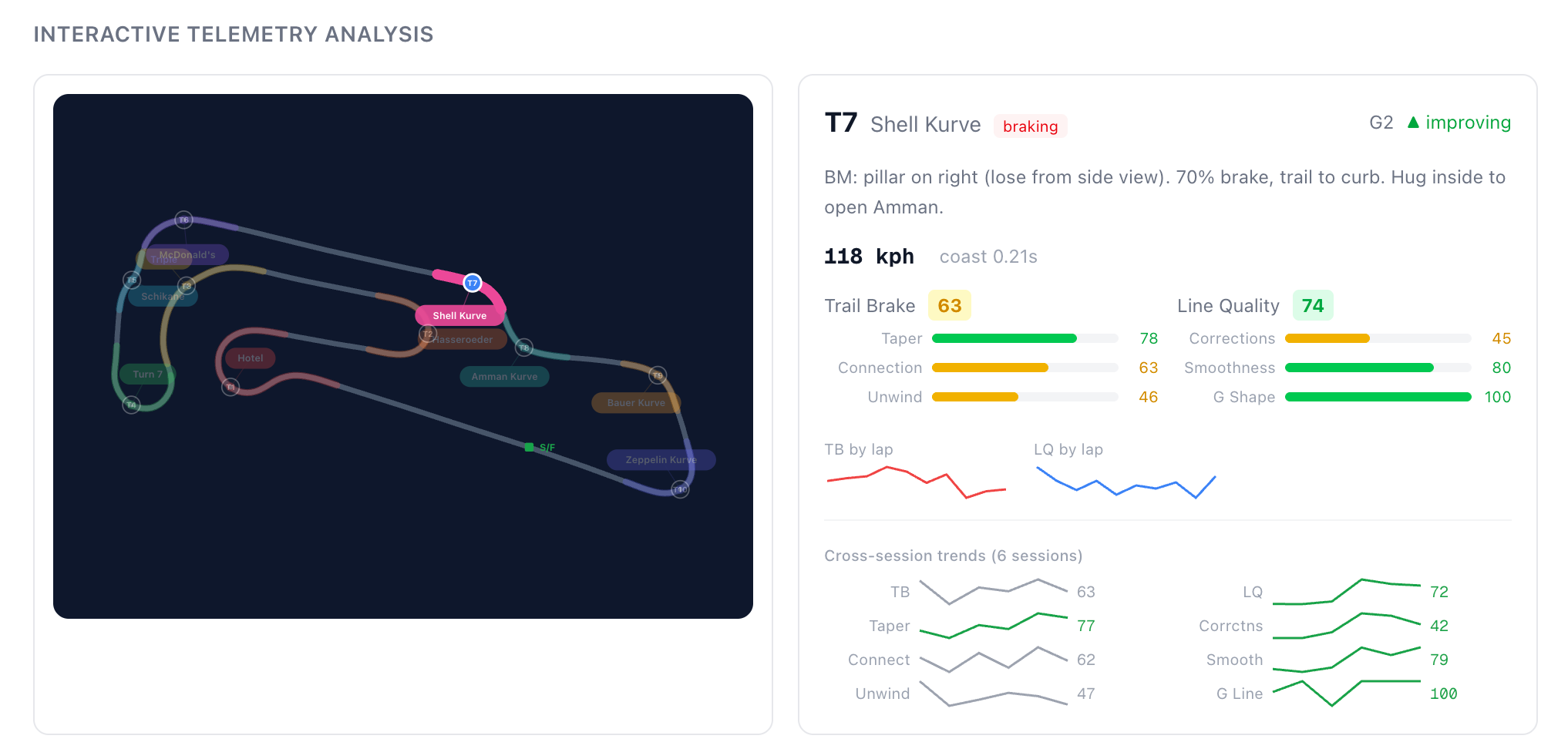

The analyst's output — per-corner consistency scores, brake point variance, trail brake grades, cross-session trends. Data for a coach to work with.

The coaching logs surprised me. They didn't just surface numbers — they read the numbers and told me what to do about them.

Not generic advice. My patterns, my corners, my sessions.

I followed the advice. In the early sessions, while I was still building the pipeline and fixing data capture, half my runs had fewer than 5 laps because I kept resetting after going off track. Once the system was running and I was actually driving with the coaching logs, a session where every flying lap landed within half a second of every other went from unthinkable to normal. Lap standard deviation dropped from over a second to 0.21s.
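Both consistency numbers are easy to compute from a list of lap times. A minimal sketch, assuming out-laps and reset laps have already been filtered out and using the sample standard deviation (the article doesn't say which variant the pipeline uses); `consistency_report` and its keys are hypothetical names:

```python
import statistics


def consistency_report(lap_times_s, window_s=0.5):
    """Summarise how consistent a session's flying laps were.

    lap_times_s: flying-lap times in seconds; out-laps and reset laps are
    assumed to be filtered out upstream.
    """
    if len(lap_times_s) < 2:
        raise ValueError("need at least two laps")
    spread = max(lap_times_s) - min(lap_times_s)
    return {
        "laps": len(lap_times_s),
        "stdev_s": round(statistics.stdev(lap_times_s), 3),  # sample std dev
        "spread_s": round(spread, 3),
        # Every lap is within window_s of every other exactly when the
        # slowest and fastest laps are within window_s of each other.
        "all_within_window": spread <= window_s,
    }


print(consistency_report([100.2, 100.5, 100.1, 100.4, 100.3]))
```

"Every lap within half a second of every other" reduces to the fastest and slowest laps being no more than 0.5s apart, so the check only needs `max - min` rather than comparing every pair.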

The Team That Emerged

What I ended up building maps exactly to a real team.

The Data Engineering Agent owned the pipeline — capturing telemetry, structuring it, loading it into a database downstream agents could consume.

The Analytics Agent derived meaning from that data. It built grading scales for driving fundamentals:

- Braking consistency

- Throttle smoothness

- G-force utilization

- Trail braking application

- Brake-to-throttle handoff

Scored against what's meaningful for a developing driver, not a pro.
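To make "grading scale" concrete, here is a sketch of how two of these fundamentals might be scored. The metrics, thresholds, and names are illustrative assumptions, not the analytics agent's actual methodology: braking consistency as the spread of brake points for one corner across laps, and throttle smoothness as how far the pedal travels beyond its net change (a progressive squeeze scores near zero; stabbing and lifting scores high).

```python
import statistics

# Illustrative thresholds for a developing driver; lower metric = better.
# Each entry is (upper bound, letter grade); the last bound must be infinite.
BRAKE_POINT_GRADES = [(5.0, "A"), (10.0, "B"), (20.0, "C"), (float("inf"), "D")]
THROTTLE_GRADES = [(0.1, "A"), (0.3, "B"), (0.6, "C"), (float("inf"), "D")]


def grade(value, scale):
    """Return the grade for the first bound the value does not exceed."""
    for upper, letter in scale:
        if value <= upper:
            return letter
    raise ValueError("scale must end with an infinite bound")


def braking_consistency(brake_points_m):
    """Sample std dev (metres) of where braking began for one corner across laps."""
    return statistics.stdev(brake_points_m)


def throttle_smoothness(throttle):
    """Pedal travel beyond the net change, for a 0..1 throttle trace.

    A monotonic squeeze from 0 to 1 travels exactly 1.0, so it scores ~0;
    stabbing, lifting, and re-applying adds extra travel and scores higher.
    """
    if len(throttle) < 2:
        return 0.0
    travel = sum(abs(b - a) for a, b in zip(throttle, throttle[1:]))
    return travel - abs(throttle[-1] - throttle[0])


print(grade(braking_consistency([102, 98, 105, 100, 95]), BRAKE_POINT_GRADES))
print(grade(throttle_smoothness([0.0, 0.9, 0.3, 1.0]), THROTTLE_GRADES))
```

Measuring excess travel rather than raw sample-to-sample change keeps the throttle score independent of the telemetry sample rate: a smooth application scores the same whether it was recorded at 60 Hz or 360 Hz.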

The Coaching Agent consumed the analyzed data and produced session coaching logs, trend analysis, and skill-appropriate advice — pulling from scraped racing fundamentals so the guidance could scale as I improved.
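Deciding what a session's coaching log should focus on can be sketched deterministically, leaving the wording of the advice to the agent. This assumes hypothetical per-session scores from 0 to 100 for each fundamental (lists non-empty, oldest first) and illustrates the idea rather than the agent's actual logic:

```python
def coaching_focus(history, min_change=3.0):
    """Pick a focus area and label each fundamental's trend.

    history: {fundamental: [score, ...]} with scores 0-100, oldest first.
    A change of at least min_change points between the last two sessions
    counts as "improving" or "regressing"; anything smaller is "flat".
    The focus area is the fundamental with the lowest latest score.
    """
    trends = {}
    for skill, scores in history.items():
        change = scores[-1] - scores[-2] if len(scores) >= 2 else 0.0
        if change >= min_change:
            trends[skill] = "improving"
        elif change <= -min_change:
            trends[skill] = "regressing"
        else:
            trends[skill] = "flat"
    focus = min(history, key=lambda skill: history[skill][-1])
    return focus, trends


focus, trends = coaching_focus({
    "braking_consistency": [55, 62, 70],
    "trail_braking": [40, 41, 42],
    "throttle_smoothness": [68, 66, 60],
})
print(focus, trends)
```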

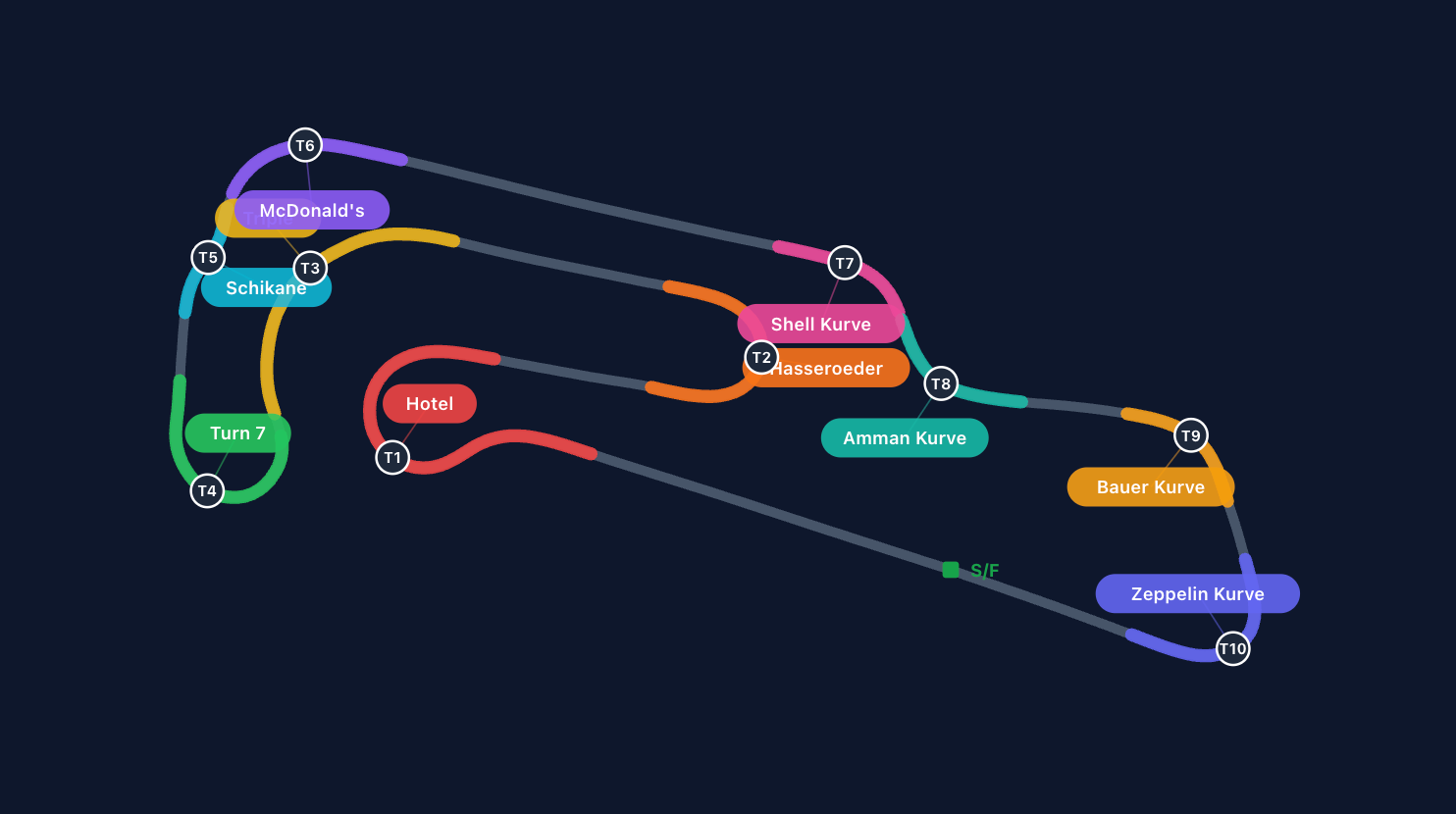

The UX/UI Agent made everything visible: SVG track maps rendered from GPS coordinates, heat lines showing braking and throttle zones, grading dashboards, session history.
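Rendering a track map from the IBT file's GPS coordinates takes little more than a projection and some SVG. A minimal sketch, assuming each sample is a dict with `lat`, `lon`, `brake`, and `throttle` (pedals normalized to 0..1) and at least two samples; the colours, the 0.05 pedal deadzone, the function name, and the demo coordinates are hypothetical:

```python
import math


def to_svg_track_map(samples, width=800, height=600, margin=20, deadzone=0.05):
    """Render GPS telemetry samples as an SVG track map with pedal heat lines.

    samples: list of dicts with 'lat', 'lon' (degrees) and 'brake',
    'throttle' (0..1). Each segment takes its colour from the sample where it
    starts: red when braking, green on throttle, grey when coasting, with
    opacity following pedal pressure.
    """
    lat0 = math.radians(sum(s["lat"] for s in samples) / len(samples))
    # Equirectangular projection around the track's mean latitude.
    xs = [math.radians(s["lon"]) * math.cos(lat0) for s in samples]
    ys = [math.radians(s["lat"]) for s in samples]
    min_x, min_y, max_y = min(xs), min(ys), max(ys)
    span = max(max(xs) - min_x, max_y - min_y) or 1.0
    scale = (min(width, height) - 2 * margin) / span  # same scale on both axes

    # SVG's y axis points down, so latitude is flipped.
    points = [(margin + (x - min_x) * scale, margin + (max_y - y) * scale)
              for x, y in zip(xs, ys)]

    lines = []
    for (x1, y1), (x2, y2), s in zip(points, points[1:], samples):
        if s["brake"] > deadzone:
            colour, opacity = "#d62728", max(0.3, min(1.0, s["brake"]))
        elif s["throttle"] > deadzone:
            colour, opacity = "#2ca02c", max(0.3, min(1.0, s["throttle"]))
        else:
            colour, opacity = "#7f7f7f", 0.6
        lines.append(
            f'<line x1="{x1:.1f}" y1="{y1:.1f}" x2="{x2:.1f}" y2="{y2:.1f}" '
            f'stroke="{colour}" stroke-opacity="{opacity:.2f}" stroke-width="4"/>'
        )
    return (
        f'<svg xmlns="http://www.w3.org/2000/svg" width="{width}" '
        f'height="{height}" viewBox="0 0 {width} {height}">'
        + "".join(lines)
        + "</svg>"
    )


# Illustrative samples, not real telemetry.
demo = [
    {"lat": 45.0000, "lon": 7.0000, "brake": 0.0, "throttle": 1.0},
    {"lat": 45.0010, "lon": 7.0000, "brake": 0.8, "throttle": 0.0},
    {"lat": 45.0010, "lon": 7.0015, "brake": 0.0, "throttle": 0.0},
    {"lat": 45.0000, "lon": 7.0015, "brake": 0.0, "throttle": 0.6},
]
print(to_svg_track_map(demo))
```

An equirectangular projection distorts over large areas, but across a circuit a few kilometres wide the error is negligible, and it avoids pulling in a GIS library.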

The PM Agent was the one I didn't expect to need. Whenever I got too deep in implementation details — which happened constantly — I'd surface for air and ask it a different kind of question: Here's what we've built. Here's where we want to go. What are we missing? Are we going in the right direction? What's the next phase? It would zoom out, assess, and help me decide what to build next.

Good PMs don't just take requirements; they figure out what you actually need, which isn't always what you asked for. The PM agent did the same thing. It pushed back on my assumptions, identified gaps I'd stopped seeing, and helped me reorganize when the project needed to shift direction.

And I sat in the pilot's seat — which, in this project, was occasionally literal. The same person directing the team was also the one strapping into the rig to test whether any of it worked. Not writing code, but doing the work only I could do: knowing the domain, reading the output, and recognizing when something was right or wrong because I'd sat in the car.

There was one more role the org chart doesn't usually show: Test Driver.

To generate meaningful data, I had to get in the sim and drive. Not just drive — drive while actually trying to follow the coaching advice the system was producing. If I ignored the advice, the follow-up sessions were useless. The data would show the same patterns with no signal about whether the coaching worked.

This is the sharpest context switch in the whole project. One moment you're an engineer diagnosing why the analytics agent scored a corner wrong. The next you're strapping into a sim rig, clearing your head, and trying to genuinely improve as a driver — because the quality of your engineering depends on it.

Most products have a clean separation between the team building the thing and the users testing it. Here, they were the same person. The feedback loop only closed if I could fully commit to both roles, and neither one could be phoned in.

That's the Test Driver. But the org chart has one more property worth naming.

The org chart is also a growth map. Any agent role could be handed off to a human or expanded into a full sub-team. The UI agent becomes a design team. The analytics agent becomes a data practice. This isn't just a pattern for solo developers. It's the same structure real organizations already run, just starting with a headcount of one.

Building the Windows Agent on a Mac

The clearest example of the PM function in practice is how the Windows agent came to exist.

The manual data collection loop was wearing me down. Copy IBT files. Push them up. Switch back to the sim. Come back, sync the files, run the analysis. Repeat. The analyst kept asking for more data. I kept having to become a courier to get it.

I was also thinking about the bigger picture: I don't just race on iRacing. I also play Assetto Corsa Competizione and Le Mans Ultimate, and like most sim racers, I move between simulators. A system that only worked if I manually pulled files from one sim wasn't a real product.

I brought this to the PM agent. Not "how do I build this" but "here's the friction I'm hitting and here's what I actually want — what should I do about it?" The answer came back clearly: automate the collection. Build a lightweight background agent that handles it without me.

All major simulators expose telemetry through shared memory files — that's how tools like SimHub and Race Labs already work. I wanted the same: a Windows app that sat in the background, detected when a session started, and logged telemetry automatically.
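The detection part of such an agent is mostly a small state machine. In the sketch below, reading each simulator's shared memory is deliberately left out (in Python on Windows that would go through the standard library's `mmap` module and its Windows-only `tagname` argument, using the sim's documented map name). `Snapshot`, `SessionRecorder`, and the debounce setting are hypothetical names, and each sim is assumed to expose some equivalent of a "connected" flag and an "on track" flag:

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Snapshot:
    """One poll of a simulator's shared memory (hypothetical, sim-agnostic)."""
    connected: bool  # sim is running and publishing telemetry
    on_track: bool   # player car is on track, not in the garage or menus


@dataclass
class SessionRecorder:
    """Start logging when the car goes on track; stop once it has left.

    settle_polls debounces the stop: the car must be off track for that many
    consecutive polls before the session is closed.
    """
    settle_polls: int = 3
    recording: bool = False
    events: List[str] = field(default_factory=list)
    _off_count: int = 0

    def update(self, snap: Snapshot) -> None:
        active = snap.connected and snap.on_track
        if active:
            self._off_count = 0
            if not self.recording:
                self.recording = True
                self.events.append("start")  # open a new telemetry log here
        elif self.recording:
            self._off_count += 1
            if self._off_count >= self.settle_polls:
                self.recording = False
                self._off_count = 0
                self.events.append("stop")  # close and upload the log here


rec = SessionRecorder()
for on in [False, True, True, False, True, True, False, False, False]:
    rec.update(Snapshot(connected=True, on_track=on))
print(rec.events)
```

The debounce matters because a brief off-track moment or a single dropped poll would otherwise split one session into two logs.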

My only Windows machine is the sim rig itself, which is dedicated hardware hooked up to the wheel and pedals, not a development environment. So I did what I always do: described the requirements to Claude Code from my Mac, iterated conversationally, then asked it to write a GitHub Action that built the app on a Windows runner. I'd run the build on the sim machine, find the bugs, go back to the Mac, report what I'd found, and repeat. Eventually I automated that too. The agent checks for new builds after each GitHub Action run and updates itself, so the iteration loop collapsed to pushing a fix, walking over to the sim, and testing.
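The self-update check can be equally small. A sketch, assuming each CI build is published as a GitHub release with a tag like `v1.4.2` (the article doesn't say how builds are distributed, and the download and install step is omitted); it queries GitHub's REST endpoint for a repository's latest release, and the owner and repo names are placeholders:

```python
import json
import urllib.request
from typing import Optional

# Assumes builds are published as GitHub releases tagged like "v1.4.2".
LATEST_RELEASE_URL = "https://api.github.com/repos/{owner}/{repo}/releases/latest"


def parse_version(tag: str) -> tuple:
    """Turn 'v1.4.2' or '1.4.2' into (1, 4, 2) for numeric comparison."""
    return tuple(int(part) for part in tag.lstrip("v").split("."))


def is_newer(remote_tag: str, local_version: str) -> bool:
    """True if the remote release is a higher version than the running build."""
    return parse_version(remote_tag) > parse_version(local_version)


def latest_tag(owner: str, repo: str) -> str:
    """Fetch the newest release's tag name from the GitHub REST API."""
    url = LATEST_RELEASE_URL.format(owner=owner, repo=repo)
    req = urllib.request.Request(url, headers={"Accept": "application/vnd.github+json"})
    with urllib.request.urlopen(req, timeout=10) as resp:
        return json.load(resp)["tag_name"]


def check_for_update(owner: str, repo: str, local_version: str) -> Optional[str]:
    """Return the newer release tag, or None if this build is current."""
    tag = latest_tag(owner, repo)
    return tag if is_newer(tag, local_version) else None
```

Comparing integer tuples rather than raw strings matters once versions pass single digits: as strings, `"1.10.0"` sorts before `"1.9.3"`.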

The fact that this worked — building a Windows app without a Windows machine, using CI/CD as a test environment — is only unremarkable if you've already internalized how much the cost of iteration has changed.

What AI Actually Changed

What changed was the size of the team you can field alone.

Every role in this project — data engineering, analytics, coaching logic, UX, product management — was filled by an AI agent. Not simulated. Actually filled. The data engineering agent built a real pipeline. The analytics agent produced grading methodology I couldn't have derived myself. The PM agent asked the questions that kept the project from going sideways.

My role wasn't "engineer who also does PM things." It was the pilot.

"The barrier in development has not gone away, but rather has shifted away from capability and toward expertise."

— Kevin Koenitzer, Earning the Skeptic's Approval

That meant being the person with enough domain breadth to consult for every role on the team — to read the Python coming out of the analytics agent and know if it was right, to look at the coaching log and know if the advice was real, to recognize when the product needed to shift direction before six weeks of work went the wrong way.

That breadth is what made it work. I've shipped React, Python pipelines, bash scripts, GitHub Actions, data warehouses. I can move across all of them and know when the output is right. The one role I'd never held directly was PM — but I'd worked closely enough with good ones to know what it looked like when it was done well. That was enough to invoke it.

The pilot doesn't have to be an engineer. But they need breadth — enough range to validate what each agent produces, enough exposure to good PMs to know what to invoke when the project needs to shift. And they need depth — knowing the problem from the inside, in a way no agent can simulate. I could read the Python, evaluate the coaching logs, and recognize when the product was drifting because I'd built systems like this before and I'd sat in the car. Take either one away and the loop breaks.

That's the seat AI can't fill. Every other seat is now available.

What This Means in Practice

- The roles don't change. Who fills them does. Start with AI agents and grow into a human team as the problem scales — or bring AI into an existing team to operate at a level you couldn't staff. The structure is the same either way.

- Scope the problem like you'd scope a sprint. The clarity you'd give a human engineer — here's the role, here's what done looks like, here's what you're not responsible for — is exactly what an AI agent needs. Vague prompts produce vague results for the same reason vague tickets do.

- Your domain knowledge is the leverage. AI can staff every technical role. What it can't replace is the person who knows whether the output is right. The more experience you bring as the pilot, the more ambitious the problems you can take on — whether you're a solo developer, a startup, or a company building an AI team at scale.