Image: My dad's office, where my love of tools began, an old airplane hangar full of drill bits, lathes, scrap metal, and endless inspiration

Our Species' Next Abstraction of Capability

Making tools for our Tool to make itself more tools...

Intro

Let’s talk about tools.

This essay is technically about “AI”. But it’s really about how that two-letter monolith defining the current zeitgeist implies so much that it now means so little. Therefore, except for quoting other speakers, that’s the first and last time I’ll use the acronym here.

Whether you’re fascinated by intellectual abstractions, evolutionary history, management science, or good ol’ computers, I wrote this for you. And if you’re an artist innovating on the other side of the coin from science, then this was especially written for you. Because if the earth is to see another da Vinci, I believe they will be found creating within this new paradigm.

If you’re feeling cynical about these tools because you don’t “fully” understand them — don’t worry, no one person does completely… but we can all learn together. The fear-mongering is loud, and frankly the cacophony of bullshit is even louder, but the principles are clear. And maybe more importantly: they’re old. We are not experiencing some alien disruption to humanity’s story. We are doing what we’ve always done: build tools.

Thesis

The paradigm shift is that our tools can now build themselves more tools, in ever-deepening abstraction… Beyond that, I argue throughout this essay that the conceptual frameworks remain much the same; what evolves is how we apply them.

Most of humanity’s greatest achievements have come through our species-defining capability to self-organize in complex ways using ever-evolving tools. This has not changed. What has changed is our invention of new tooling that now abstracts that capability with the immense leverage of digitization. These tools are still nowhere near the intelligence-per-watt efficiency of our own brains… [1: pmc.ncbi.nlm.nih.gov/articles/PMC10629395] But we don’t need to birth an Einstein or create a god to develop real powers “indistinguishable from magic”. [2: As the prophetic writer Arthur C. Clarke coined: “Any sufficiently advanced technology is indistinguishable from magic.”] How?

I propose that the synthesis of computer science and management science will cultivate the most transformational innovations of our next decade.3I expect one day a new interdisciplinary term for this synthesis will arise.

As a second consequence, we’ll soon experience an intensely accelerating shift in capital reallocated from human labor to digital labor, and therefore to the shareholders of that digital labor. However you feel about that statement, don’t avoid or negate it — do something about it. We’ll come back to this at the end of the essay.

Part I: Tools Evolve, Abstractions Remain

Our frameworks of decomposing complexity are the same, while the tools have changed.

Doing what we already do best

Early in my career I worked at Flexport for a wildly ambitious founder who was given a few billion dollars to go build a trillion-dollar company.4Funny how 7 years later those numbers simply provoke a relative “Meh?” And frankly, nothing short of that goal would suffice.

Nobody would say that one founder alone would be capable of such a gargantuan task. And certainly no one would say any LLM or agent or what have you today could single-handedly achieve such a task…

So what did the founder do? Well, he hired a few people to do some tasks along with him and equipped them with the tools to operate a business. Then they all hired even more people to do even more tasks, and so on… the normal, boring story of building a large company. We do it all the time. There are for-profit companies that employ over 1 million humans in a pyramidal structure. And non-profits, and governments, and social clubs, whatever collection of humans we like!

Okay so now we have organized hundreds, thousands, millions of humans into these large social networks we call organizations. That collective strength is built on what many anthropologists consider one of our most defining traits: our capability for social coordination. We may not be ants but we certainly can self-organize impressively!

We primarily achieve this mind-blowing scale and complexity of coordination through another of our most defining species traits: language. We use language to communicate with each other, to develop our ideas, and interestingly enough to develop further language. Language is a conceptual tool — maybe our most consequential “tool” ever — for developing thought, for communicating that thought, and for building upon previous thought into further abstractions of thought. Hmm, that sounds analogous to these newfangled language engines we’ve developed…

“Memory”; or, Knowledge-as-Code (KaC)

Those thoughts crystallize into memories and their visceral importance to us at the most innate depths feels precious. So I respect the fears of some when they say, “These tools will erode our memory... We’ll forget how to think deeply…”.

But let’s remember: Thousands of years ago, oral storytelling was our only knowledge transfer system. Elders memorized epic poems, genealogies, and survival techniques. Then we invented writing... then books.... then the printing press... then Google search… then the Kindle... Each time someone warned: “This will erode our precious memory. People will forget how to truly know things.” No, we evolved as we evolved our tooling.

Yes, we offloaded more memory to books, but never all! Books didn’t erase who we are — rather they hardened memory. They let us build on knowledge across generations instead of restarting every time the village historian died. We still tell stories. And we also write them down.

Ironically, I always have in mind the quote from David Allen, creator of Getting Things Done: “The mind is for having ideas, not for holding them.”5David Allen on the mind and ideas.

youtube.com/watch?v=nCHd24Gi-G4 Or as the Chinese proverb frames it: “The dullest pencil is better than the sharpest memory,” so personally I always keep a notebook and pen on me.

Our brains are fantastic creative engines, so let’s unleash them from the drudgery of, say, indexing entire dictionaries and instead let the tool we invented (computers) do that much, much better than we biological analog beings ever could.

Now we’re abstracting again, and with far greater leverage than we ever had before. In fact, inspired by “infrastructure-as-code” products, I propose a new term: knowledge-as-code (KaC) systems. It’s mostly “just” markdown files. Text files that agents interpret and act on. Hmm, sounds a lot like your company’s internal knowledge base [6: If Confluence, my condolences...] or Google Drive folders or even your grandparents’ file cabinets of manila folders!

The difference now is we’ve combined the indexing capabilities of computer databases with the interpretive capabilities of humans into pure software.

Knowledge-as-Code is knowledge encoded in formats that are: (a) version-controlled, (b) human-readable, (c) agent-interpretable, and (d) composable into larger systems. Subsequently, on top of all those basic markdown files, people will build complex systems to manage these new mini-libraries of Alexandria.7One nifty example I’ve begun using recently is Eddie Landesberg’s knowledgevault.

pypi.org/project/knowledgevault Though you can start small and simple today, as the industry thought leader Allie Miller made accessible via her Context Vault methodology.8alliekmiller.com/ai-context-vault
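To make those four properties tangible, here is a minimal sketch of what a tiny KaC system could look like. Everything in it is an assumption for illustration: the `Note` class, the `parse_note` and `compose_context` helpers, and the `---` header layout are hypothetical, and none of it reflects how knowledgevault or the Context Vault methodology actually work. The files are plain text (human-readable), they parse into structured data (agent-interpretable), and tagged notes can be stitched into one context string (composable). Version control is not shown; the assumption is simply that the files live in a git repository.

```python
from dataclasses import dataclass


@dataclass
class Note:
    """One knowledge file: plain-text metadata plus a markdown body."""
    meta: dict
    body: str


def parse_note(text: str) -> Note:
    """Split a '---' delimited header of 'key: value' lines from the body."""
    meta = {}
    body = text
    if text.startswith("---\n"):
        header, _, body = text[4:].partition("\n---\n")
        for line in header.splitlines():
            key, sep, value = line.partition(":")
            if sep:
                meta[key.strip()] = value.strip()
    return Note(meta=meta, body=body.strip())


def compose_context(notes: list[Note], topic: str) -> str:
    """Compose every note tagged with `topic` into one prompt-ready string."""
    picked = [n for n in notes if topic in n.meta.get("tags", "").split(",")]
    return "\n\n".join(f"# {n.meta.get('title', 'untitled')}\n{n.body}" for n in picked)


# Hypothetical file contents; in practice these would be read from disk.
raw_files = [
    "---\ntitle: Refund policy\ntags: support,billing\n---\nRefunds within 30 days.",
    "---\ntitle: Brand voice\ntags: marketing\n---\nFriendly, never snarky.",
]
notes = [parse_note(t) for t in raw_files]
context = compose_context(notes, "billing")
print(context)
```

The point of the sketch is how little machinery is needed: the "database" is a folder of text files, and the "query" is a tag filter that hands an agent only the notes it needs.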

To be clear, I do think we have been eroding our memory recently, but that’s not because we’re storing ideas on transistors — it’s because we’re endlessly scrolling through flickers of pixels of those fragmented ideas non-stop… Let’s be careful which tools we vilify and which we conflate.

Okay, so returning to the parable of Flexport: In these large organizations filled with tasks and goals, what do all these humans do? Imagine that pyramid of nodes in a network aiming towards one “mission”. Well, they use their most important asset of all, their brains, to create and complete tasks towards that goal. And they often “upgrade” that asset through different external means (aka your Learning & Development budget). What happens when we can scale that even further?

Tools on tools

Over the last 3.3 million years our ancestors’ brains tripled in size. Many evolutionary biologists argue this is directly related to our tool use9For some validating evidence, read Erin E. Hecht’s 2023 research that “the acquisition of one technical skill can produce structural brain changes conducive to the discovery and acquisition of additional skills, providing empirical evidence for bio-cultural feedback loops long hypothesized to link learning and adaptive change.”

nature.com/articles/s41598-023-29994-y. In using tools we created a positive feedback loop to stimulate further growth.

Now this theory will likely never be decided conclusively, but what is conclusive? Over the last 10,000 years, our brains have not physically evolved as significantly as they did over those previous 3 million years. But what has certainly accelerated much, much faster is our advancement of tools. Our tools have skyrocketed. Literally — we have built rockets to the moon, and beyond10Folks don’t talk enough about Voyager 1 and Voyager 2! Insane feats of engineering, including 2024’s reprogramming over 15 billion miles away…

science.nasa.gov/mission/voyager/where-are-voyager-1-and-voyager-2-now, using tools that were built by other tools, built by other tools, and so on. We never could have built a rocket with simply obsidian hammers but using those obsidian hammers we crafted wheels which carried carts… on and on, for hundreds of thousands of years, as we engineered newer, more complex, more powerful inventions on top of the previous ones, creating novel capabilities.

This is our intellectual evolution.

Stone tools, writing, the printing press, electricity, software, large language models. The gaps between each keep shrinking: millions of years, then thousands, then centuries, decades, mere years.

One of the most beautiful abstractions of tools is that I do not need to understand how a tool was built, or even how the tools that built it were built, in order to use it.

(Hmm, sounds a lot like modern software, doesn’t it? How many readers of this essay can write Assembly code?11Don’t worry, as Steve Yegge recounted this week to Gergely Orosz, “Anybody would be stupid to say you’re not a good engineer if you can’t write assembly language today.”

youtube.com/watch?v=aFsAOu2bgFk No, be honest. Sure, I took an Assembly class during my engineering undergrad. I remember zero of it, yet I still use its capabilities all day every day.)

We drive cars constantly and 99% of car drivers, including myself, could not tell you how the robots in a car factory operate. That’s fantastic! Because this creates a wonderfully useful and empowering abstraction of tool capability.

Flexport’s founder12Ryan Petersen was a great boss to work for. Don’t underestimate following a leader you actually respect. would often recount the famous trope that no one person today could tell you exactly how a pencil is made. There are way, way too many different humans with different specialties and different equipment to manufacture the graphite in the core, to chop down the wood for the shaft, to drive the trucks to the factories. This is how our vastly complex global species lives and — very importantly — thrives.

My friend John Cowgill recently reminded me of Steve Jobs’ famous “bicycle for the mind” quote, which got me researching the landmark 1973 Scientific American article13scientificamerican.com/article/bicycle-technology that Jobs was referencing. Bicycles made humans 5x more energy efficient at locomotion, far more efficient than most other animals. We used a tool to upgrade our physical capabilities. Inspired by this, Jobs realized this could be the intelligence amplification via tooling that they were building with the Macintosh. Judging by the last four decades, he was quite right!

Three timeless abstractions

We’ve found this amplification so useful we constantly work on it. We invented telephony to amplify our language across continents. Then we invented fiber optic cables to be able to do it even more quickly. Rinse and repeat.

So what happens when we put it all together? We have a network of human worker nodes using tools to accomplish tasks in coordination towards a set of goals. An organizational abstraction of capability.

And those human workers have specialties. You have your online marketing expert and your head of logistics and your highly experienced sales rep. You might even call it a sort of “mixture of experts” within your organization. (We’ll come back to this term later.)

By now we’ve highlighted three key abstractions of capability, progressively building on themselves:

- The organizational abstraction to effectively extend one human’s ideas through the means of many humans.

- The abstraction of human capability via tools.

- The abstraction of tool capability by using tools to create further tools of complexity, enabling exponential leverage.

So we have humans extending further humans, tools amplifying humans, and tools upgrading tools.

The most successful organizations — of any type — leverage all three of these principles deeply, even if often unwittingly. Beyond using them individually, we weave them together. A team of humans slack each other to figure out how to combine their individual scripts of software into one cohesively powerful piece of software, etc.

Management science (aka species self-awareness)

Now following our historical proclivity for learning and self-reflection, we’ve packaged all these conceptual frameworks over the last century into a science of how to manage such collections of humans. Fittingly for this essay, my favorite class in university happened to be IEMS 342 Organizational Behavior14Taught by the fantastic Professor Bill White.

williamjwhite.com, which taught these fundamental learnings and frameworks on management science — for corporations, for schoolyard dodgeball teams, for a small family, for any human interacting with other humans.

As part of these schools of management science, psychology, sociology, and even economics, we also train those (human) nodes in our vast organization to make themselves individually more capable, not just with external tools to accomplish tasks but to upgrade their internal “tool”, their brain. And beyond doing this intelligence upgrade on the individual level person-by-person, organizations also do this on a broader scale by the selection and retention of workers.

Utterly impressive when successful organizations scale this practice to millions of people. Now imagine you could apply that scale, and more, with the inorganic leverage of digitization…

Proposing an evolutionary lineage of information

How? Well, we’ve invented a new way of combining those 3 abstractions, which we’ll return to later in this essay.

First, let me propose a novel lineage of abstraction (along with my incredibly over-simplified description of what makes each layer possible):

↓

Transistors (physics — controlling electrons!)

↓

Computers (electrical engineering)

↓

Software (“traditional” computer science)

↓

LLMs (statistics + entire corpus of the internet + data centers)

↓

Leadership (propagating values at scale)

That last one may surprise you. But soon LLMs will just be the next expected abstraction of “software” — already increasingly called agentic engineering. And then what? How do you manage all those modular agents... and all the humans working alongside them? By being a great leader15I owe most of my experiential foundation in leadership to my Northwestern mentor and friend, Professor Adam Goodman.

lead.northwestern.edu.

Sure, we’ll need to update the curriculum in my alma mater’s Organizational Behavior class, but so did they after the iPhone became ubiquitous and indispensable. The tools evolve, new abstractions emerge, while frameworks remain.

As the famed researcher and OpenAI-cofounder Andrej Karpathy put it: “I do see AI as fundamentally an extension of computing in some pretty fundamental way. I feel like I see a continuum of this kind of recursive self-improvement... a progression of automation in society, right? Extrapolating the trend of computing.”16Dwarkesh Patel podcast with Andrej Karpathy.

dwarkesh.com/p/andrej-karpathy

So let’s keep extrapolating and see where that leads…

These three abstractions — organizational, tool capability, and human amplification — aren’t just academic curiosities. The firms that purposefully build on these principles will wield immense leverage. Let’s discuss the clearest example.

Part II: From Stone to Silicon and Back Again, an Anthropic’s Tale…17Yes, this is a title mashup inspired by the luminaries Bill Inmon and J.R.R. Tolkien.

I have never worked at Anthropic and so I do not know if this is actually their product philosophy. But it seems so. To be clear, though many organizations are now embodying these ideas, I consider Anthropic often the first to deeply prove them.

Creating a new evolutionary branch

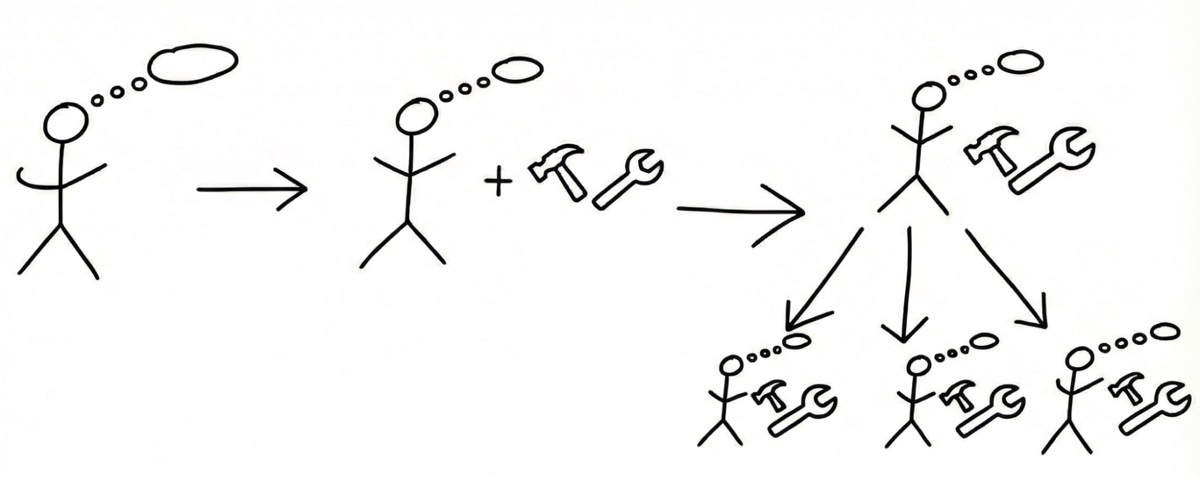

Recall that pyramidal organization from Part I: thousands of humans coordinating as hierarchical nodes. Frontier labs are building the same architecture, but now some nodes are silicon “subagents”. The organizational principles remain: clear boundaries, defined roles, tool equipment, goal setting, resource allocation. Only now we can work at gigahertz speeds.

Increasingly I see Anthropic evolving their offerings by taking their name to heart, learning from our human evolutionary history and building on the core components discussed in Part I.

And not just their company name, but also of course their flagship product: Claude. It’s rare to be able to reasonably claim one person single-handedly18I recognize he benefited deeply from his Bell Labs colleagues, but he mostly ran solo. changed the fate of the world (or at least got us all to the next stage much sooner than we would’ve without him). Claude Shannon, the inventor of Information Theory, deserves this credit, so I’m grateful Anthropic chose to celebrate him19Also notable that they chose a male name after most other digital “assistants” have female names, which sadly I don’t believe is an accident but an artifact of cultural gender bias., as not enough people know his name!

Industry leader and head of Microsoft AI, Mustafa Suleyman, controversially suggested that we’re creating a new “digital species”. Rather than debate the validity of literal speciation or not20Which the final section of the essay touches on., I think the analogy is most instructive. Certainly we should treat it differently — this tool exists in silicon, we do not — and not demand human-esque “protections” for what is simply software. But instead let’s remember that we are very purposefully evolving a knowledge-based, tool-using, instruction-following, coordination-capable “thing” explicitly fed on our own human learnings. Therefore, maybe we can learn from our own history and thoughtfully translate it to our new situation.

Before we get too far out, let’s talk tangibly.

This all started over many decades with previous researchers teaching computers language. Now, Anthropic seems to be the leading lab in teaching those language models to use tools, just as we learned as a species over the last few hundred thousand years. Everyone’s obsessing over model parameters and benchmark scores. Meanwhile, Anthropic is doing something far more interesting: building tools for their Tool to build itself more tools... in an ever accelerating loop.

Is any frontier lab out there building tools — I don’t mean the “brain” itself (aka today’s LLMs), but tools for the brain — like Anthropic is?

This is why Claude Code becomes an incredibly elegant synthesis of extending the “brain”21I bet someone at Anthropic read Annie Murphy Paul’s The Extended Mind.

anniemurphypaul.com with more and more “tools” as simply one cohesive piece of software because it’s all just code.22Thank you to Matt Cheung for pinpointing to me this corporate philosophy from a recent Anthropic event. Worth noting that although very similar under the hood, I consider this ethos distinct from OpenAI’s visual builder AgentKit release.23OpenAI: Introducing AgentKit.

openai.com/index/introducing-agentkit Not inherently wrong (CLI can get tiring sometimes), BUT I’ll wager that the long-term product development philosophies may diverge and lead to different results…

Okay, cool idea, but how to put it into useful practice? Like with anything we want to practice, learn the skill.

Anthropic Skills: deterministic + probabilistic

Until 2022, mainstream software was essentially deterministic: If X, then Y. Now we have “stochastic software”. It still starts as just 1s and 0s on a chip, but each processing instance (aka inference) samples from a probability distribution, so the output can vary from run to run: If X, then Why...?

Both types can be fantastically useful (humans are the most infamously non-deterministic machines!), and even more so when smartly mixed together. The next critical evolution of modern software will be engineering thoughtfully composed architectures of deterministic code + probabilistic code.
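One common way to mix the two is to let a probabilistic step propose and a deterministic step dispose. The sketch below is illustrative only: `probabilistic_step` is a hypothetical stand-in that fakes a model call with a random choice (no real LLM API is used), and `deterministic_check` and `run_with_guardrail` are names invented for this example.

```python
import random


def probabilistic_step(prompt: str, rng: random.Random) -> str:
    """Stand-in for a model call: the same prompt can yield different outputs."""
    return rng.choice(["42", "forty-two", "about 42", "42"])


def deterministic_check(output: str) -> bool:
    """Plain old code: the same input always gives the same verdict."""
    return output.isdigit()


def run_with_guardrail(prompt: str, rng: random.Random, max_tries: int = 50) -> int:
    """Sample the probabilistic step until the deterministic check accepts it."""
    for _ in range(max_tries):
        candidate = probabilistic_step(prompt, rng)
        if deterministic_check(candidate):
            return int(candidate)
    raise ValueError(f"no valid output after {max_tries} tries")


answer = run_with_guardrail("What is 6 * 7?", random.Random(0))
print(answer)
```

The design choice worth noticing is the division of labor: the stochastic part supplies flexibility, while the deterministic part supplies the guarantee about what is allowed through.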

Our evolutionary trajectory of digital capability has been:

- First we were simply probabilistic humans.

- Then we were probabilistic humans with deterministic code.

- Recently we became probabilistic humans with deterministic code and with probabilistic code.

- Now we can use both deterministic code and probabilistic code… AND that same probabilistic code can itself use further deterministic code and probabilistic code, and on and on.

Humans… Deterministic tools… Probabilistic tools… Humans…. De…

Are you thinking what I’m thinking? An all-time visionary’s iconic product launch…

Steve: “Are you getting it??”24Watch the iconic launch.

youtube.com/watch?v=MnrJzXM7a6o

Do you see now where this far-looping abstraction of tooling is going? It’s wildly exciting.

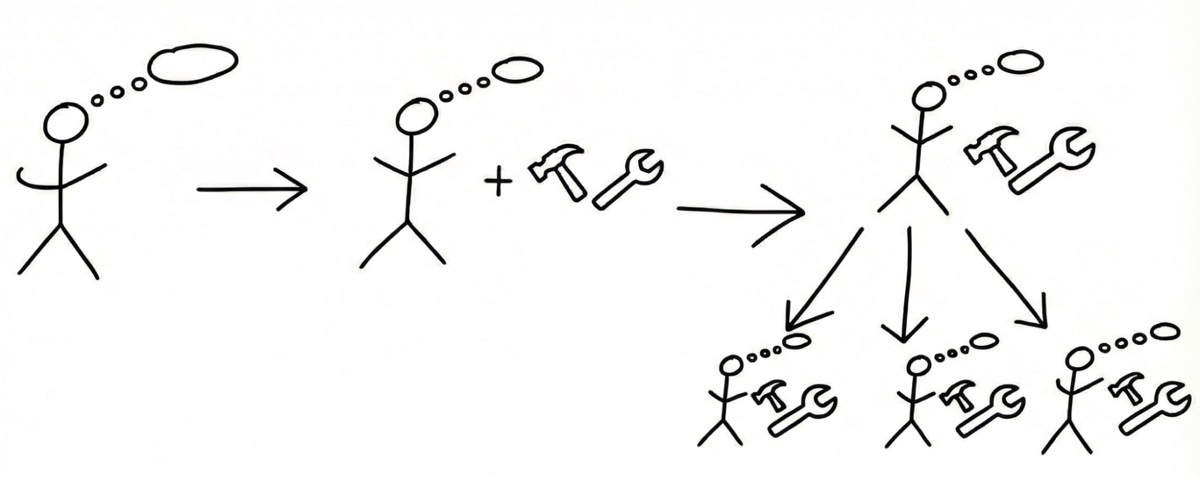

It’s exciting and completely analogous to what organizations do today with (solely) humans. Humans use a variety of other humans and a variety of tools to accomplish tasks. But now our abstraction of task management has a new depth, breadth, and speed that only massive clusters of data center GPUs could provide. So back to our company example, we’ve been operating like this:

And now those tools can themselves propagate at scale into further workers and tools:

Credit: Author + Gemini Nano Banana, styled in homage to XKCD

The pioneering organizations of the future will not consist of, say, 1000 humans working together. Nor will they consist of 1 person with 1000 agents. The successful orgs will be 1000s of humans, each with 1000s of agents. Or whatever size you prefer (~Dunbar’s number feels compelling to me personally).

Alright, but how to put this into action?

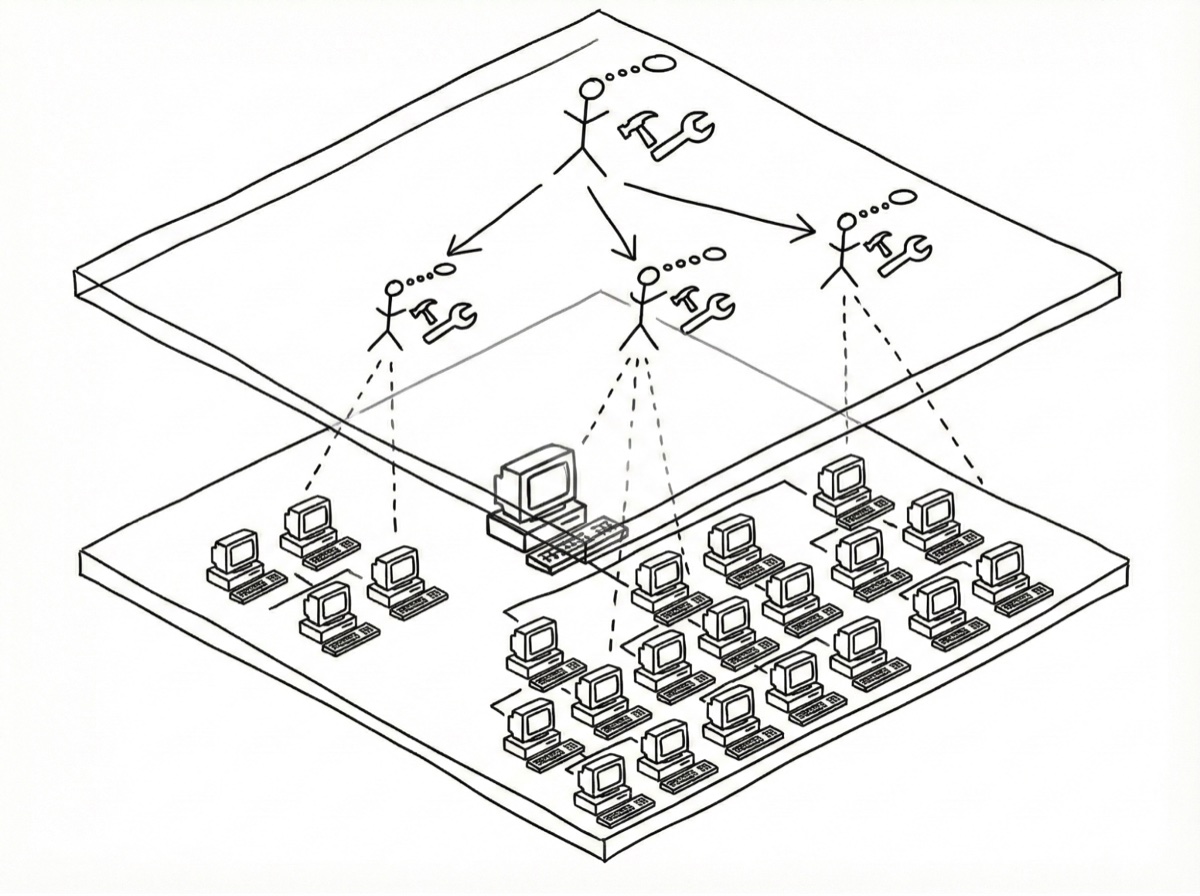

Anthropic’s release of Skills, which are technically pedestrian by traditional software standards, but are conceptually brilliant, cements this framework. Typical of their style, Anthropic was very lowkey about this new feature. Regardless, Simon Willison thinks Skills might become much bigger than their conversation-dominating older sibling of MCPs because of this thoughtful, extendable, powerful composition. And now all of the industry has adopted it as an open standard, just as they did with MCPs.

Nonetheless, it’s important to always remain lucidly critical of herd mentality. One of the most agentically ambitious software engineers out there today, Geoffrey Huntley, called out GitHub’s ubiquitous MCP for using ~25% of an average LLM’s entire context window just to load the tool!25ghuntley.com/allocations

In Skills, Anthropic’s design of progressive context disclosure is a clever method to reduce that wasted token burn:

- Level 1: Always loads 4 lines of YAML (deterministic metadata)

- Level 2: Model (probabilistically) decides relevance; if relevant, loads full skill

- Level 3: When (probabilistically) deemed necessary, loads further instructions (probabilistic) and scripts (deterministic)

Source: Anthropic Engineering26Anthropic: Equipping Agents for the Real World with Agent Skills.

anthropic.com/engineering/equipping-agents-for-the-real-world-with-agent-skills
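The three levels above can be sketched in a few lines. This is a toy model under loud assumptions, not Anthropic’s implementation: the `Skill` class, `looks_relevant`, `build_context`, and `load_everything` are hypothetical names, the keyword match stands in for the model’s own (probabilistic) relevance decision, and counting whitespace-separated words is only a crude proxy for tokens.

```python
from dataclasses import dataclass, field


@dataclass
class Skill:
    name: str
    description: str                             # Level 1: always in context
    instructions: str                            # Level 2: loaded only if relevant
    extras: dict = field(default_factory=dict)   # Level 3: loaded on demand


def count_tokens(text: str) -> int:
    """Crude stand-in for a tokenizer: one token per whitespace-separated word."""
    return len(text.split())


def looks_relevant(skill: Skill, task: str) -> bool:
    """Stand-in for the model's relevance call: keyword overlap with the name."""
    return any(word in task.lower() for word in skill.name.lower().split("-"))


def build_context(skills: list[Skill], task: str, wanted_extras: set[str]) -> str:
    """Load metadata for every skill, then deeper levels only where needed."""
    parts = [f"{s.name}: {s.description}" for s in skills]               # Level 1
    for s in skills:
        if looks_relevant(s, task):
            parts.append(s.instructions)                                  # Level 2
            parts += [v for k, v in s.extras.items() if k in wanted_extras]  # Level 3
    return "\n".join(parts)


def load_everything(skills: list[Skill]) -> str:
    """The wasteful baseline: every level of every skill, up front."""
    parts = []
    for s in skills:
        parts += [f"{s.name}: {s.description}", s.instructions, *s.extras.values()]
    return "\n".join(parts)


skills = [
    Skill("pdf-forms", "Fill in PDF forms.",
          "Step 1: open the file. Step 2: list fields.",
          {"reference.md": "Field types: text, checkbox, radio."}),
    Skill("brand-voice", "Write in the house style.", "Be friendly. Avoid jargon."),
]
lean = build_context(skills, "Fill out this PDF", wanted_extras=set())
eager = load_everything(skills)
print(count_tokens(lean), "tokens lean vs", count_tokens(eager), "tokens eager")
```

With two toy skills the savings are small, but the gap widens with every skill that is installed and not needed for the task at hand, which is exactly the situation the MCP criticism above describes.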

At this point, you might be thinking: wait, this whole conceptual framework of how to direct probabilistic workers to use deterministic code is, like… what running a company is already? Or sounds like the jargon of “human-in-the-loop”? Exactly! This is nothing wholly new! Where you were previously managing your employee Cameron’s output, now it may be Cameron’s and Claude’s that you need to manage. Same same.

Consider some of Anthropic’s greatest industry firsts:

- Model Context Protocol (MCP) [27: The open standard for connecting LLMs to external tools and data sources. modelcontextprotocol.io]

- Skills (and then Plugins for shareability)

- Claude Code (adios IDEs!)

Each one isn’t just a feature — it’s a tool-building tool. MCPs enable LLMs to use any arbitrary software tool or dataset as an extended tool. And as the industry expert and my favorite technical writer Simon Willison28Seriously, go read all of his blog. It’s a goldmine of highly practical, openly critical, open-source, highly experienced wisdom, with a refreshing touch of levity (and pelicans!). I owe much of my agentic engineering knowledge to him!

simonwillison.net points out about Skills, it’s purposefully simple — an elegance Steve Jobs himself would appreciate. But start imagining the abstractions possible as you layer tools on tools. Same ol’ systems thinking and engineering, now with newer tech.

This is acceleration in action: not just tools (velocity) but tools making tools (acceleration) making tools (compounding acceleration29As Lizzie Baetz reminded me, and you physics nerds will know, it’s all about jerk.).

Wait. Stop reading, and go build something30Then please return and finish reading :)

Before I continue on with the future-looking evolutionary analogies, I need to invoke my other favorite thinker out there right now, Wharton professor Ethan Mollick, because of his down-to-earth, balanced, reasonable takes on the crazy hurricane of opinions out there: “Even if AI development stopped today, we would have years of change ahead of us integrating these systems into our world.”31Mollick: “The Present Future” — published November 4, 2024.

oneusefulthing.org/p/the-present-future-ais-impact-long

I agree! And guess what? He published that on November 4… 2024! That was 3 months before even Sonnet 3.7 was released… which now feels archaic in capability next to its Opus 4.6 sibling.

So if you roll your eyes at Altman’s trillion dollar infra plans32Or are more worried about the circular financing bubble brewing…

wsj.com/tech/ai/is-the-flurry-of-circular-ai-deals-a-win-winor-sign-of-a-bubble-8a2d70c5 or Zuckerberg’s Metaverse or Kurzweil’s “Singularity”, that’s totally fine (probably reasonable of you!). Everyone’s opinions about the future are speculative.

But what’s already possible with our current tools is real. So forget the hype — please ignore it for the rest of this essay — and just start building!

[I’ll wait for you to go and prototype something. Anything! Just give it a go.]

We social beings stick together

…Okay, good to have you back. What’d you build??

You (hopefully) just built something. Well, not just you, but you and your gremlin. Yes, I actually call all my little Claude/Gemini/et al. helpers “gremlins”. [33: So it was a pleasure to read Simon’s “research goblin” article and think, ah, may all our gremlins and goblins work together one day peacefully! simonwillison.net/2025/Sep/6/research-goblin] It started as a silly joke, but it’s grown on me. They’ve certainly evolved past Microsoft’s Clippy but are not (yet) some sci-fi deity, so gremlin fits.

In 1984 Steve Jobs predicted34Any excuse to rewatch that ad is worth it!

youtube.com/watch?v=VtvjbmoDx-I that computers would contain these gremlins by the early 90’s — “as if there’s a little person inside that box who starts to anticipate what you want... like you have a little friend.”35Steve Jobs 1984 Access Magazine interview.

thedailybeast.com/steve-jobs-1984-access-magazine-interview

Forty years later, he wasn’t entirely wrong. But the real breakthrough wasn’t making the computer more like a person. It was making the computer a better tool for the person. The bicycle for the mind. The amplifier of human capability.

So what’s going to come next? Sociological capabilities. Coordination. Orchestration. Good old fashioned teamwork. We’ve taught teamwork to each other for millennia. Now the physics has changed a bit, but the game remains.

We’re beginning to see some great attempts at this agent coordination from notable creators:

- Anthropic’s native subagents and agent teams are designed to smoothly, autonomously call each other. [36: Claude Code sub-agents documentation. code.claude.com/docs/en/sub-agents]

- Cursor’s experimental long-running agents are building their own coordination infra. [37: cursor.com/blog/self-driving-codebases]

- Linear has built native integrations to assign tasks to 3rd-party agents (e.g. Cursor, ChatPRD) just as you would to any human teammate. [38: linear.app/integrations/agents]

- Steve Yegge built Beads purely for agents to task-manage themselves. [39: steve-yegge.medium.com/beads-blows-up-a0a61bb889b4] Then Gas Town to give them a factory. And now Wasteland to… create decentralized conglomerates?

- Airflow and Superset creator, Maxime Beauchemin, built Agor for humans to orchestrate coding agents.40Agor: orchestrate coding agents.

agor.live

But this is just the beginning… keep reading to get a sneak peek at how we’re going much further at Snowpack Data.

Individual Genius < Mixture of Experts… < Mixture of Geniuses

Late last year, Andrej Karpathy spoke about the limitations of “agents”, saying it’s not the year of agents but the decade of agents because they don’t yet have enough intelligence.41Then ~2 months later he shortened that timeline, by a lot. Things move really really fast these days!

dwarkesh.com/p/andrej-karpathy

Yann LeCun (one of the acclaimed “godfathers of AI”) also infamously stated he’s not interested in LLMs anymore, thinking they’re reaching their capacity of intelligence.42youtube.com/watch?v=eyrDM3A_YFc

I trust both of their domain expertise.

And yet, here’s what I further propose: rather than the “god in a box” approach that Karpathy poignantly referred to, what if we refocused on “tools in a box”? At a lower scale — not as some techno-mythical being above us, but as digital tools that we’re creating and can modularly deploy. Any digital brain is still ones and zeros stored on transistors, whether LLM, “world model”, or a simple random forest algorithm. Because even if the individual “intelligence” of one model remains X, what happens when you give that 1 X the most advanced tools out there, and then what happens when you coordinate 100 of those X’s?
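A back-of-the-envelope calculation hints at why coordinating many X’s can beat one X. The sketch below assumes the simplest possible coordination scheme, a majority vote, and assumes each voter is independently right with the same probability `p`. Both are assumptions made for illustration: copies of the same model tend to make correlated mistakes, so real gains will be smaller than this idealized arithmetic suggests. The function name `majority_accuracy` is made up for this example.

```python
from math import comb


def majority_accuracy(p: float, n: int) -> float:
    """Probability that a strict majority of n independent voters is right,
    when each voter alone is right with probability p (use an odd n)."""
    need = n // 2 + 1
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(need, n + 1))


# One voter, a small team, and roughly "100 of those X's".
for n in (1, 11, 101):
    print(n, round(majority_accuracy(0.6, n), 3))
```

Under these idealized assumptions, a voter that is only modestly better than a coin flip becomes far more reliable in a group, while a voter at exactly 50% gains nothing. Orchestration amplifies whatever individual capability already exists; it does not replace it.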

Because even DeepSeek managed to crash the global stock market for a day when they most notably showed how far a Mixture of Experts (MoE) architecture can go. MoE routes work among specialized sub-networks inside a single model, so it is only an analogy here, but the lesson rhymes: coordinating experts beats leaning on one. And that’s what this is about. This is why Anthropic co-founder/CEO Dario Amodei talks often about a “country of geniuses in a datacenter”. [43: darioamodei.com/essay/machines-of-loving-grace]

More than a decade before Amodei’s essay, researchers from MIT and Carnegie Mellon published in Science their own substantiation of this factor: collective intelligence “is not strongly correlated with the average or maximum individual intelligence of group members but is correlated with the average social sensitivity of group members, the equality in distribution of conversational turn-taking.”44Evidence for a Collective Intelligence Factor in the Performance of Human Groups.

science.org/doi/10.1126/science.1193147 Coordination bests individual genius.
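To put a rough number on "what happens when you coordinate 100 of those X's", here is a minimal sketch of the classic majority-vote calculation (Condorcet's jury theorem). It is an illustration, not a claim about how any lab coordinates models: the function name `majority_accuracy` is made up for this example, and it assumes every worker errs independently with the same accuracy `p`, which real agents sharing the same model rarely do.

```python
from math import comb


def majority_accuracy(n: int, p: float) -> float:
    """Chance that a strict majority of n voters is right.

    Assumption (hypothetical, for illustration): each voter is right with
    probability p, independently of the others. Use odd n to avoid ties.
    """
    need = n // 2 + 1  # smallest number of correct votes that forms a majority
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(need, n + 1))


if __name__ == "__main__":
    # Same individual "intelligence" (p = 0.6), different team sizes.
    for n in (1, 11, 101):
        print(n, round(majority_accuracy(n, 0.6), 3))
```

Under those assumptions, a crowd of merely decent voters beats any single one of them, and the same formula cuts the other way: if each voter is worse than a coin flip, adding more of them makes the group worse. Coordination amplifies whatever you feed it.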

Regardless of whether models plateau or evolve rapidly, what matters even more is the tools we give them and how we orchestrate them together. That might be the greater unlock that many are underestimating — clearly Anthropic gets it! Claude Code quickly became the industry leader not because Opus 4.6 is smarter than GPT 5.4 but because of the tool harness they’ve built for it, as compared to Codex’s or Copilot’s (o poor, disowned Copilot…45Microsoft’s Claude Code partnership.

theverge.com/tech/865689/microsoft-claude-code-anthropic-partnership-notepad).

Further proof? Cursor, often considered the second-best coding agent out there today, is not “just” a router of other labs’ models, but a brilliantly built harness (aka toolkit) on top of those models. They deserve a lot of credit for exploding onto the scene with many firsts (though I often see them adopting Anthropic’s ideas a few months later: plugins, subagents, etc.).

Constitutional AI: values for self-organization

Just as human organizations evolved from arbitrary tribal customs to constitutional democracies, our systems of agents need guiding principles. Anthropic’s Constitutional AI46anthropic.com/news/claudes-constitution mirrors this progression — creating clear frameworks that allow autonomous action within defined boundaries.

This is what enables the next leap: organizations designing themselves. May sound sci-fi when you think of computers, but we do this every day as humans in organizations.

Okay, now bear with me for a minute. You might not believe my next extension of this abstraction, but I say it because:

- My first point of this whole essay was that learning from the last abstraction should inform the potentiality of the next

- We humans usually fail to understand the exponential, again and again47Directly inspired by Julian Schrittwieser’s humble, analytically-driven article on this topic.

julian.ac/blog/2025/09/27/failing-to-understand-the-exponential-again

I was awe-struck by this research from Altera (now Fundamental Research Labs48Ironic to me, they’ve pivoted from the most philosophically creative research to the most quotidian: Excel agents.), and I think it’s been underappreciated49Altera’s Minecraft agent research.

arxiv.org/abs/2411.00114: 1,000 Minecraft agents created roles, governance, and collaboration without micromanagement. One became the priest. Another became the top trader. These characters didn’t have supreme processing power, but instead a better architecture (Altera’s code) and a more conducive environment (Minecraft) for collaboration.

Just like societies self-organize within legal frameworks, digital minds can now self-organize within value frameworks. That’s the kind of societal lubrication Hammurabi understood was transformative.

In other words: the creation of, and adherence to, values — now partially programmable through evaluation frameworks (aka Evals) that make abstract judgments increasingly enforceable. Not “perfect”, yet neither is our society, in which we still manage to thrive.
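For the curious, here is roughly what "partially programmable values" can look like at the smallest possible scale. This is a toy sketch, not any vendor's eval framework: the rubric entries, `evaluate`, and `pass_rate` are hypothetical names invented for this example, and the keyword checks are deliberately crude stand-ins for the model-graded or human-graded checks a real eval suite would use.

```python
from typing import Callable, Dict, List

Check = Callable[[str], bool]

# Hypothetical rubric: each named check encodes one "value" as a testable rule.
RUBRIC: Dict[str, Check] = {
    "cites_a_source": lambda text: "http" in text or "doi" in text.lower(),
    "admits_uncertainty": lambda text: any(
        word in text.lower() for word in ("may", "might", "likely")
    ),
    "stays_concise": lambda text: len(text.split()) <= 50,
}


def evaluate(output: str, rubric: Dict[str, Check] = RUBRIC) -> Dict[str, bool]:
    """Run every check in the rubric against one output."""
    return {name: check(output) for name, check in rubric.items()}


def pass_rate(outputs: List[str], rubric: Dict[str, Check] = RUBRIC) -> float:
    """Fraction of outputs that satisfy every check in the rubric."""
    if not outputs:
        return 0.0
    passed = [all(evaluate(o, rubric).values()) for o in outputs]
    return sum(passed) / len(passed)
```

The point is the shape, not the checks: a value gets written down as a rule, the rule gets versioned alongside the code, and every output can be scored against it.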

Still feel hand-wavy? We already do this every day when subjectively hiring another human employee depending on whether they’re a “culture fit” or whatever other buzzword. These things are never perfect, but we keep applying our existing lessons. Plus Git-versioned code is a lot more robust and predictable (even if probabilistic) than whatever volatile mood your Head of Recruiting may be in on a given day.

Ethan Mollick’s been saying it: formal organizational structures unlock performance exponentially. In traditional management science, that sounds dumb obvious. But he’s actually talking about the new frontier of orgs interwoven with human and computer workers.

Because if Minecraft agents can figure out taxation policy and religious evangelism50Fun fact: the Minecraft priest didn’t seem to get Martin Luther’s memo as it bribed its way to success! through emergent organization, maybe our teams (humans and/or computers) deserve better structural support than “just communicate better.”

Evolutionary Parallels

So we’ve seen how Anthropic’s products embody our three abstractions: organizational (agent coordination), tool capability (building skills), and human amplification (Claude Code). This is not a coincidence. The same feedback loop that turned stone-knapping into brain growth is now running in software. Each tool Anthropic builds makes Claude more capable of building the next tool. Each abstraction layer compounds the one beneath it.

Anyone can now apply them. What abstraction will you build?

Part III: So what’s next?

Ever since the advent of computers, there has been a gradual shift of capital from human labor to digital labor, which is now beginning to significantly accelerate51Please hold your economic reactions until the final essay section.. How much faster and to what proportion, no one knows… but the direction seems increasingly certain.

So, as Hall of Famer Wayne Gretzky advised, we will skate to where the puck is going.52Can’t wait until we start our intramural hockey team: Snowpuck Data

Asking great questions becomes the skill

The relative importance of being able to correctly answer a question versus asking a good question continues to tilt further towards Asking. Einstein tipped us off when he sagely reminded us that “imagination is more important than knowledge.” Google Search dramatically altered this balance when suddenly the world’s collective intelligence was indexed for anyone to find for free. And now you can converse with it, not just page-rank it. So from where will your advantage arise?

First, let’s return to the abstraction lineage proposed earlier:

Information Theory → Transistors → Computers → Software → LLMs → Leadership

Why leadership? Because once everyone has access to the same probabilistic tools, what differentiates isn’t the tools — it’s how you manage them. Just as the best CTOs today aren’t the best coders but the best orchestrators of talent, tomorrow’s leaders won’t prompt the best — they’ll design and manage the best collaboration systems.

Ethan Mollick nailed it: “Deeply understanding how you give people instructions & information they can act on.”53Mollick on LinkedIn.

linkedin.com/posts/emollick_all-the-technical-language-around-ai-obscures-activity-7344827485919846400-1BLA That’s leadership.

In fact, that quote was him speaking about how to best work with LLMs, but it applies equally to silicon and carbon teammates.

The strongest builders aren’t just coders anymore — they’re managing mixed teams of humans and agents, giving feedback to both, designing systems where both thrive. Same methodology as today’s CTO, but with exponentially greater leverage.

Jensen Huang recently said if he were in school now, he’d focus on the physical sciences.54Jensen Huang on studying physical sciences.

cnbc.com/2025/07/18/nvidia-ceo-jensen-huang-study-field-computer-science-software-gpu-alexnet-generative-physical-ai-university.html A great recommendation and, I’d argue, very much the same pattern Mollick espouses. The scientific method trains you to form a hypothesis, decompose a problem, run experiments, and communicate findings clearly. Effective leadership — giving humans (and computers) instructions they can productively act on — practices the same underlying skills.

“Great leaders ask great questions” is such a data-backed, widely researched consensus across so many leadership studies that it feels cliche to write.55One of many affirming research reports.

hbr.org/2018/05/the-surprising-power-of-questions Yet cliches are cliche for a reason.

This definition is not “technical” at all. It sounds like being a good communicator... like being empathetic... like being a thoughtful manager and friend. Which means there’s never been a greater opportunity for new talent — the fastest, smartest learners of abstraction — to break into “Tech”, or to appropriate and use these tools anywhere else. Those artists described in the Intro? Find where you can create the most value in this lineage of capability and jump in. Don’t wait.

The future of knowledge work

Returning to the original thesis, what does the merger of computer science and management science actually entail? How novel is it really…? Because it sounds quite similar to the well-trodden thesis of good ol’ 20th-century computer science: designing innovative human-tool collaboration systems… so, computers?

Essentially. This is not wholly new. We’ve been gradually moving along this spectrum ever since we started encoding information into digital technologies. But we’ve crossed one critical inflection: one of our tools is now more worker than “just” a tool. And these new “workers” can be instantly created at a mindblowing scale. But scale only matters if it can be harnessed.

Think back to my initial Flexport example — thousands of humans in a pyramidal org, each with their slice of the mission to accomplish. What made it possible was coordination: the right work assigned to the right person with the right tools at the right time. That problem hasn’t changed. What has changed is the hybridization of workers. Therefore, so should the software to coordinate humans and computers together.

Frontier software for consulting firms, professional services, and likely all knowledge work is going to evolve rapidly and drastically. The divide between firms that adapt and firms that don’t will sharpen quickly.

Creating Leverage

At Snowpack Data, we already practice and build inside this framework. And we get the pleasure of dogfooding it every day in our data consulting services. Since the beginning, we’ve built our own proprietary backend system for the consulting business: Cronos56cronosplatform.com. As we’ve grown, matured, and ideated on new features for how to streamline our professional services, we apply our software chops to solving that problem for tomorrow (and for future customer firms of Cronos). Currently, Cronos is the backbone of timesheets, invoicing, billing, staffing, scenario planning, accounting, and financial planning. And that’s just the beginning.

If Cronos is the backend of knowledge services, then now we’re building the frontend… which increasingly accelerates towards the thesis of this essay. Why? Because a business (or any organization) has always been predicated on creating leverage. Management science is sociological leverage. Computer science is digital leverage. Smartly combine them and even Archimedes would be jealous…

But a single huge lever, as Archimedes envisioned to move the Earth, is too crude a tool for all the complexity of business. We need a more granular precision of capabilities. Our daily work confronts us with this decomposition problem: which abstraction of which task, assigned to which worker, equipped with which tools, executed at which time? We are data nerds by nature, but we think from business outcomes first, always working top-down from the goal. The balance between those two is different for every client, including ourselves.

This practice has led us to build Tessellate — the operating platform for modern knowledge teams, where every task teaches the system how to work better. We’re starting with what we know best — Data consulting — but the underlying problem is universal: any organization doing knowledge work faces the same challenge of coordinating humans and computers toward a goal.

What makes Tessellate different is that the intelligence lives in the management layer, not the model. This is not self-learning software. It is a self-learning organization system. Humans have always built processes that improve themselves (e.g. Six Sigma, Agile, DevOps). What is new is that the synthesis of management science and computer science now lets us do this with speed, scale, and leverage never before possible. Tasks generate signal. That signal reshapes how the next task gets assigned, abstracted, and executed. We are building the management system for modern teams that learns from itself, and we are building it by using it.
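To make "tasks generate signal, and that signal reshapes the next assignment" concrete, here is a deliberately tiny sketch of such a feedback loop. It is not Tessellate's implementation: the `Assigner` class, the worker names, and the scoring rule (a smoothed success rate per worker and task type) are all assumptions chosen for illustration.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple


class Assigner:
    """Toy feedback loop: past task outcomes steer the next assignment."""

    def __init__(self, workers: Iterable[str]) -> None:
        self.workers: List[str] = list(workers)
        # (worker, task_type) -> [successes, attempts]
        self.stats: Dict[Tuple[str, str], List[int]] = defaultdict(lambda: [0, 0])

    def score(self, worker: str, task_type: str) -> float:
        wins, tries = self.stats[(worker, task_type)]
        # Laplace smoothing: an untried worker starts at 0.5, not 0 or 1.
        return (wins + 1) / (tries + 2)

    def assign(self, task_type: str) -> str:
        # Pick the highest-scoring worker; ties go to the earliest listed.
        return max(self.workers, key=lambda w: self.score(w, task_type))

    def record(self, worker: str, task_type: str, success: bool) -> None:
        # The "signal": every finished task updates the stats used next time.
        entry = self.stats[(worker, task_type)]
        entry[0] += int(success)
        entry[1] += 1
```

A usage example under the same assumptions: start with `Assigner(["human_analyst", "sql_agent"])`, record one failed `"sql_review"` for the human, and the next `"sql_review"` is routed to the agent, while an unrelated task type is unaffected. The model behind each worker never changes; only the management layer learns.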

If that sounds like something you want to build with us, we would love to hear from you.

Therefore, our strategy is to let the entire industry keep working from the bottom-up as we work from the top-down. Everyone is racing to build more and more powerful models. Meanwhile those same labs and other shops are building more powerful agents (essentially, models + tools). Now folks are building fantastic orchestrators of those agents. We do not want to compete with any of that — let the experts cook!

Instead we’re simply going to ride their waves to where our expertise gives us the most leverage.

Choose your lever

My dad Bob Olodort was a lifelong inventor and never met a tool he didn’t like — he built and unbuilt things since he was a toddler. Yet he never once formally studied engineering. In fact he originally studied film. I wish I had asked him if this was his purposeful ethos or if he unconsciously practiced it, but regardless he transformed his creative passion into technical capability. He may have had almost every mechanical tool known to humankind in his wonderful mess of an office, but he never had the tools of abstraction we have now. What abilities would he have honed? We’ll never know, but you can give ’em a try!

Remember that lineage from Part I? You get to choose where you work along it.

Love writing emails because the practice makes you a better thinker? Then write them. Love your four-hour slide deck process because it stimulates deep thinking? Then keep doing it. You’re not “falling behind” — you’re choosing where on the abstraction chain you want to work. And you still keep thinking. Depending on your role, your goals, and your situation, many options can be reasonable. Of course, you don’t see any accountants today using an abacus, so do be purposeful and mindful…

People who love to write won’t stop writing. You just have more opportunities for where to focus your energy. In fact, I find I’m writing even more these days because of the latent inspiration I can access.

Some people still write assembly code. Some write C. Some write Python. Some use no-code tools. And already, some use natural language to build complex systems. All of these are valid choices for different people in different contexts with different goals.

I think so often about Simon Willison’s eloquent encapsulation of the new choice we’re all presented: “Quitting programming as a career right now because of LLMs would be like quitting carpentry as a career thanks to the invention of the table saw.”57simonwillison.net/2025/Jul/3/table-saws

This might feel very empowering. Or you might honestly be in mourning for your past craft, as the highly pragmatic and thoughtful software engineering writer Gergely Orosz recently shared58blog.pragmaticengineer.com/the-grief-when-ai-writes-most-of-the-code. Probably a whole mix of emotions!

Outro: We (still) make the choices.

Capitalism, etc…

Okay, maybe by now you read me as some industry fanboy… I fully recognize that most companies today promote similar words of tech optimism in pursuit of their own greater profits. But just because a message can be co-opted does not deny its original validity. So please bear with me.

I do not write this essay as some techno-optimist’s blind carte blanche approval of all digital efforts happening today. In fact, many of them I’d categorize as either exploitative or simply a waste of our precious resources and time.

Most immediately, I’m concerned about the further proliferation of ads59Most notably, first Amp Code and then ChatGPT, though it’s only a matter of time until Gemini too.. An advertisement superseding another algorithmically optimized result is inherently a degradation of intelligence. Otherwise, why would the advertiser pay money to compete with a free result? Maybe that’s “fine” in a Google Search list, but when we’re engaging with digital minds explicitly built for their actionable intelligence, that’s simply dilution. If you disagree I’d love to hear your counterargument…

As if our economy and lived experience need more ads in them. Call it a personal aesthetic choice, but when will we evolve past this era?

Luckily, we have a choice. We actually can build “machines of loving grace”60darioamodei.com/essay/machines-of-loving-grace. You can build and use them towards positive progress. Sure, we’ll disagree on what’s “positive,” but that’s the timeless question of What values do we want?

This essay is meant to reset your views on these tools back to the metaphorical stem cell that they are, so that YOU can decide — must decide — what you want to do with them. Maybe you still hate them. Maybe you’re obsessed. Maybe conflicted. Maybe confused. Probably a messy mixture.

I regularly hear many dire concerns about these nascent tools that are quite reasonable, need to be discussed, and most of them addressed. But I’d argue that the vast majority of these are crucially not about the tool itself but how humans may use it.61Yes, yes, I know you’re already thinking about those other apocalyptic concerns. Next section!

Ages ago we invented hammers. Many humans built homes with them. Also, some humans bludgeoned others to death. Still, we keep manufacturing billions of hammers because they’re fantastically net-positive for us. Nuclear fission was a brilliant invention. With it, we built (using many hammers) nuclear reactors to cleanly62Yes, there’s radioactive waste, but again, wildly net-positive for us versus our currently dominant fossil fuels... power millions of homes. We also used it to create nuclear bombs, which are obscenely horrific and never should have been made!

This is the neutrality duality of tools.63For a broadly fascinating and brilliantly revelatory read on this topic, among many other things, I couldn’t more highly suggest David Graeber and David Wengrow’s The Dawn of Everything. Thank you, Adam Ashkenazi, for the rec and for the insistence on evaluating intent within tools.

dawnofeverything.industries

Rather than shift blame to the inanimate object, let’s focus responsibility on the humans creating and using them.

And sure, certain tools carry loaded64I always intend my puns. intent... A gun is “harmless” without someone to pull the trigger. But it is explicitly designed to kill and only to kill. Meanwhile, software as a conceptual tool can be inherently flexible and purposefully moldable. It’s within the intent of its design that preferences arise. This is one more critical reason to care about the values we instill into our new tooling. This is why Anthropic’s Constitutional AI is thoughtful and important. And why I’ll definitely never use Grok.

To critically understand these things, we must disentangle the tool from the intent behind it. That intent can be militaristic and nationalistic, like the nuclear bomb. Or it could be simply economic — today, capitalistic. And those intentions are important. But they actually precede the tool. These How’s — how to economically allocate resources, how to protect national security, etc. — are topics much too large, complicated, uncertain, and sufficiently divisive to distract from this simple essay on tools.65But if you want to talk privately, I’ll giddily converse for hours about economic systems, so reach out. Just remember…

Do not conflate the tool with the user. Do not conflate the tool with the system. Do not conflate tool X with tool Y with tool Z… there’s a panoply of different “tools”!

So maybe, given all that, you still don’t want to use these new tools for [insert reason]. That’s okay! We all need to think for ourselves. As my past manager Jake Peterson would retort: what are YOU going to do about it?

Don’t ever forget: we always, always have a choice.

My bingo card for 2026-2030

In roughly quasi-chronological order, but hey, I’m not the Oracle of Delphi…

- Tools keep improving. Increasingly people positively use them. Some, but fewer, still decry them.

- The market propaganda about “the imminent next wave” keeps propping up the market’s bull run.

- At some point(??), the intelligence of LLMs — rather than the tools or applications of them — hits diminishing returns* (à la LeCun’s, Karpathy’s, and others’ predictions).

- Our stock market and most underwater startups crash… because Capitalism loves a good boom-and-bust!

- The “haters” laugh and jeer: “I told you so! No AGI, hah!”

- Those still flush with cash (a patiently waiting Google, etc.) buy up OpenAI et al. for pennies on the dollar.

- Meanwhile people keep using these wildly useful tools.

- “Those still flush with cash” get even flusher…

- The relative flow of capital from human labor to digital labor continues forward, tilting the see-saw more and more.

- The cycle goes on…

*Of course, if firms can develop and sustainably deploy new “world models” or whatever other form of digital intelligence they may invent before the lemmings, sorry, I mean the investors, freak out and leap off the equities cliff, then maybe we avoid another crash. But again, Capitalism does love a boom-and-bust cycle...

What’s important to take away is that though the fates of Technology and Economy are (increasingly) intertwined, they are not the same thing.

Again, we can make choices.

ASI, SSI, God-in-a-box, uh oh…

Probably the most controversial topic in this field today. With opinions ranging from Eliezer Yudkowsky (“we’re all gonna die”)66ifanyonebuildsit.com to propagandists of benevolent future gods, and everything in between.

I agree with what I believe is a growing faction that we:

- don’t exactly know what’s going to come

- will in our lifetime create a sort of digital intelligence that surpasses ours in many, many important ways.67Take heart! They’ll probably never have some aspects of human intelligence, as analogously argued by Thomas Nagel in his “What Is It Like to Be a Bat?”

rintintin.colorado.edu/~vancecd/phil201/Nagel.pdf

- as a human species, have never dealt with an intelligence vastly superior to ours… so of course that should be scary!

Here’s where my bias against most organized religions will emerge: I think we have poisoned ourselves with too frequent a parable of a vengeful god that smites us for, like what, eating an apple? Really?

I believe that, like many things in our subjective, qualitative, fuzzy world, we have a value choice between positivity and negativity. That holds especially when we are the ones making something: even if we bestow self-propelling coding capabilities upon these systems68Impressive recent example from Karpathy’s own nanochat open-source experiments.

x.com/karpathy/status/2031135152349524125, we are still the ones bestowing them initially.

Many of you, maybe even those at Anthropic, may now think I’m being childishly myopic and dangerously foolish to presume such a “quaint” future.

NO.

Instead I am fiercely and relentlessly optimistic that we can do right.

I know two things to be true about humans:

- The likelihood of us putting the genie back in the bottle is inversely proportional to its usefulness.

- We can learn from history.

We invented mythologies like a treasonous Prometheus gifting us fire because long enough ago just the act of controlling a fire was supernatural to us! And now we teach little camp scouts to make their own.

In 2015, the brilliant Tim Urban published a seminal essay. Back then it blew my mind. Now so much of it proves wildly prescient, including his 10-year timeline to 2025.69“Being at a thousandth in 2015 puts us right on pace to get to an affordable computer by 2025 that rivals the power of the brain.” Ding ding!

waitbutwhy.com/2015/01/artificial-intelligence-revolution-1.html Go (re-)read it. (Part 2 gets far out, but worth it too).

So, sure, this next era will probably be the craziest, hardest, most potent (positively and negatively) transition of our cosmologically ephemeral ~6M years since evolutionarily splitting off from the ancestors we share with chimpanzees and bonobos. Alright. We humans love a good challenge!

So keep thinking and building and learning because that’s always been our best method to…

Keep evolving.